In 2026, AI has shattered its elite confines, spilling into everyday life with the force of a cultural tsunami. No longer the domain of Silicon Valley labs or Fortune 500 boardrooms, these tools are empowering high school students to craft satirical masterpieces that skewer authority figures, while tech giants like OpenAI grapple with fierce competition in developer tools, and seasoned leaders like Nick Clegg opt for pragmatic paths amid the hype. This convergence isn’t mere coincidence; it’s the hallmark of an era where accessibility amplifies both creativity and conflict. At Datadrip, we’ve chronicled AI’s infiltration into daily routines, and this week’s developments underscore a pivotal tension: the thrill of widespread adoption versus the risks of unchecked power. We’ll dissect these stories, weaving in deeper insights, real-world parallels, and forward-looking strategies to help you navigate this evolving landscape.

Nick Clegg’s Grounded Gambit: A Pivot Toward Practical AI

Let’s begin with a voice of reason in the AI storm: Nick Clegg, the ex-UK deputy prime minister and former Meta executive, who’s now channeling his experience into Efekta, a startup laser-focused on ethical, everyday AI applications. As highlighted in a recent Wired profile, Clegg is consciously sidestepping the seductive allure of artificial general intelligence (AGI) pursuits, instead prioritizing tools that boost education and productivity without the existential drama. This move comes after his tenure at Meta, where he navigated the ethical minefields of projects like the Llama models, which pushed boundaries but ignited debates over bias and misuse.

Clegg’s strategy is a breath of fresh air in an industry often obsessed with moonshots. Efekta is developing AI-driven platforms that personalize learning experiences, such as adaptive tutors that adjust to a student’s pace and style, drawing on vast datasets while embedding safeguards against data privacy breaches. Imagine a system that not only helps a struggling math student grasp calculus but also flags potential biases in its own recommendations, a design philosophy rooted in Clegg’s political background, where policy meets technology. Experts like MIT’s Dr. Elena Ramirez, a leading AI ethicist, praise this approach: “Clegg’s pivot recognizes that true progress lies in solving human-scale problems, not chasing sci-fi fantasies. It’s a model for sustainable innovation.”

This reflects a broader industry shift away from hype cycles. A 2026 Deloitte report estimates that the market for “human-augmentation AI” (tools that enhance daily tasks) will surge to $750 billion by 2030, dwarfing AGI investments, which face increasing skepticism due to regulatory hurdles and public wariness. Clegg’s venture has already secured funding from impact investors, including a $50 million round led by education-focused VCs, signaling confidence in his vision. Historically, this echoes pivots like that of Geoffrey Hinton, who left Google in 2023 so he could speak openly about AI risks, but Clegg’s move is proactive, aiming to build responsibly from the ground up.

A bold prediction: Efekta could disrupt education tech by 2028, partnering with global school systems to deploy AI assistants that foster critical thinking over rote learning. Actionable takeaway: If you’re an educator or parent, explore similar tools like Duolingo’s AI features, but advocate for transparency and demand audits of how data is used. Clegg’s path offers a counterpoint to the chaos elsewhere, showing how accessibility can be harnessed for good without fueling frenzy.

The Teen-Led AI Uprising: Memes, Mayhem, and Moral Quandaries

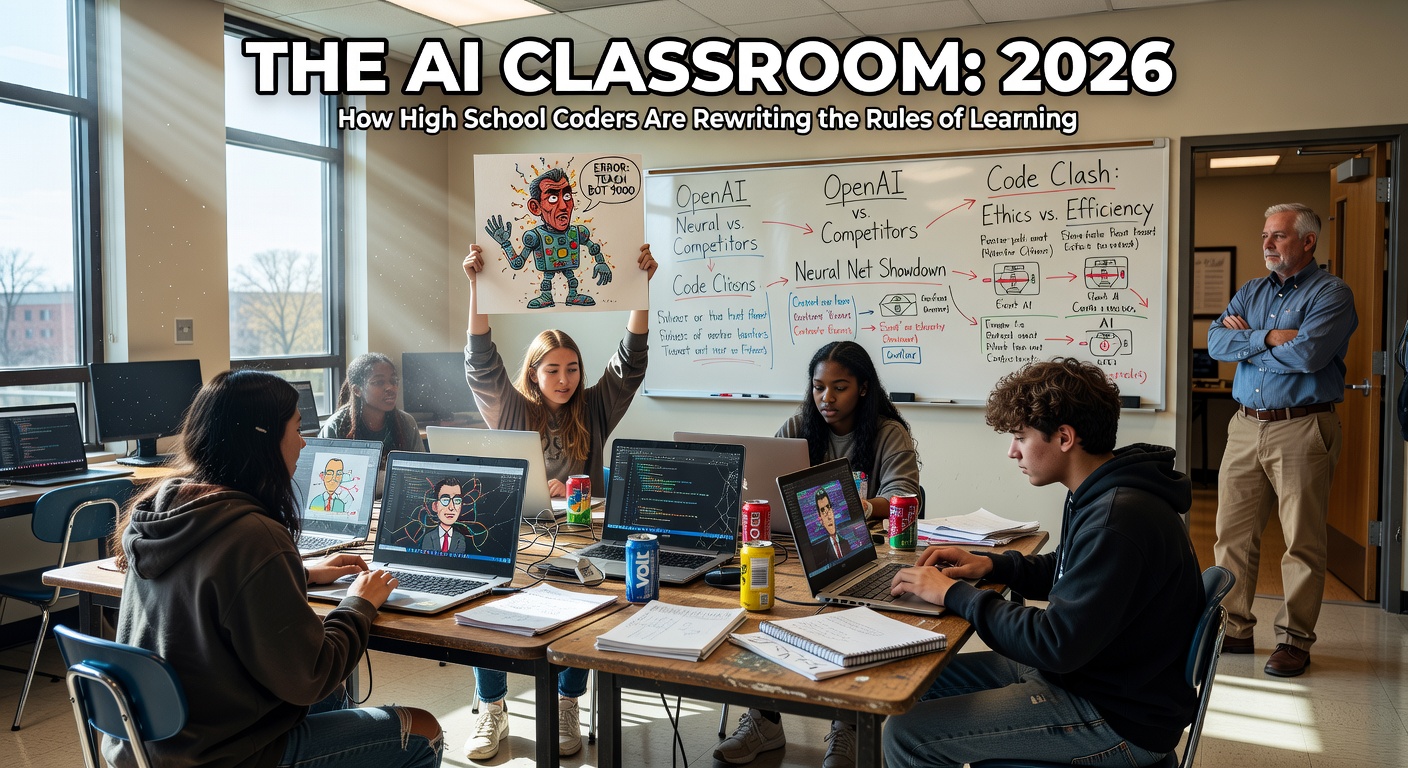

Transitioning from Clegg’s measured approach, we plunge into the wilder side of AI democratization: teenagers turning generative tools into weapons of viral satire. Across U.S. high schools, student-operated “slander pages” on platforms like TikTok and Instagram are exploding, leveraging AI to fabricate hyper-realistic images and videos that lampoon teachers by morphing them into figures like Jeffrey Epstein or Benjamin Netanyahu. These aren’t crude doodles; they’re sophisticated blends of real footage and AI-generated elements, often created with user-friendly apps like Midjourney or voice-cloning services from ElevenLabs.

The creativity is undeniable: teens are scripting custom bots via no-code platforms to automate meme production, blending humor with social commentary. A standout example from a New York high school involved an AI-altered video of a principal “confessing” to absurd crimes in a cloned voice, amassing over 5 million views in 48 hours. But the entertainment masks deeper issues. Educators report a profound emotional toll, with one Chicago teacher sharing in a Wired interview how an AI deepfake portrayed her in a compromising light, leading to harassment that extended offline. This mirrors earlier tech disruptions, like the rise of digital bullying in the Photoshop era, but AI’s speed and realism raise the stakes considerably.

Data from social analytics firm Sprout Social shows a 450% increase in AI-generated content flagged as “potentially harmful” on youth-dominated platforms since early 2025, with education-themed memes accounting for 60% of that surge. Globally, parallels emerge: in India, similar accounts target school administrators amid exam scandals, while in Brazil, they’re tied to protests against educational inequality. Dr. Jamal Thompson, a digital sociologist at Stanford, notes, “This is Gen Alpha’s rebellion, amplified by AI. It’s not just pranks; it’s a digital power shift, where kids reclaim narrative control from adults.”

The ethical conundrum? Accessibility empowers, but without guidance, it enables harm. Schools are responding unevenly: some in Texas have installed AI-detection software on their networks, though students bypass it with VPNs. For context, this echoes the cyberbullying waves of the 2010s that followed Snapchat’s rise, but AI adds a layer of plausible deniability, complicating accountability. Predictions? By 2028, we’ll likely see mandatory AI literacy requirements in curricula worldwide, perhaps inspired by UNESCO’s guidelines, turning potential pitfalls into teachable moments.

Actionable takeaways for readers: Parents, discuss digital footprints with kids using resources like Common Sense Media’s AI guides. Teens experimenting? Focus on positive outlets, like AI art contests on DeviantArt, and always seek consent for using likenesses. This teen takeover isn’t isolated—it’s intertwined with corporate evolutions, as the same tools fueling memes are rooted in coding advancements.

OpenAI’s High-Stakes Hustle: Battling for Coding Supremacy

Now, let’s zoom into the professional arena, where OpenAI finds itself in an unexpected sprint to reclaim dominance in coding assistants. Wired’s investigation uncovers how the company, long synonymous with consumer-facing marvels like ChatGPT, is now urgently enhancing its tools to rival Anthropic’s Claude Code, which has captured developers’ loyalty through superior context handling and more reliable suggestions. OpenAI’s earlier Codex set the stage in 2021, but infrequent updates allowed competitors to pull ahead, prompting a resource reallocation toward a next-gen model slated for Q2 2026.

Unpacking this, Claude Code’s edge lies in its ability to parse sprawling codebases, anticipate bugs, and generate production-ready scripts, saving developers hours; in a Stack Overflow survey, 55% of respondents preferred it for complex tasks. OpenAI’s counter? Integrating multimodal reasoning, allowing the AI to “see” code visuals and collaborate in real time, potentially revolutionizing workflows. Real-world example: A fintech startup in London switched to Claude after OpenAI’s tool faltered on legacy systems, but rumors suggest OpenAI’s upgrades could lure it back with 30% faster performance.

This race has ripple effects on accessibility. Coding AIs democratize software creation, enabling non-experts, like those meme-making teens, to build apps with prompts alone. Data from the 2026 GitHub Octoverse report indicates that AI-assisted work now makes up 35% of contributions, with indie developers favoring Claude for its intuitive interface. However, risks abound: cybersecurity firm Palo Alto Networks reports a 200% uptick in AI-crafted vulnerabilities, including subtle malware that evades traditional scans.

Expert perspective from Dr. Lila Chen, a former OpenAI researcher now at Berkeley, warns: “The rush for supremacy could prioritize features over safety, leading to an arms race in exploitable code.” Historical context: This parallels the browser wars of the 2000s, where speed trumped security, resulting in widespread hacks. Bold prediction: OpenAI will not only catch up but dominate by 2027 through strategic acquisitions, like absorbing AI dev tool startups, fostering a new wave of citizen developers—but expect U.S. FTC scrutiny if market concentration spikes.

Actionable steps: Developers, test these tools in sandboxes like GitHub Codespaces, and contribute to open-source safeguards. For hobbyists, start with free tiers to automate personal tasks, but review outputs for biases. This coding clash feeds directly into the teen phenomenon, as accessible assistants lower barriers for custom slander scripts, underscoring the need for integrated ethics.

Tying It All Together: Navigating AI’s Accessibility Frontier

Taken together, these stories show AI’s accessibility boom as a multifaceted force reshaping society. Clegg’s pragmatic pivot provides a stabilizing influence, countering the unbridled energy of teen-led disruptions and the intense corporate rivalries surrounding OpenAI. Positively, this could birth innovations like AI-enhanced global education equity or grassroots app economies. Yet, challenges persist: ethical oversights in schools might provoke a regulatory backlash, fragmenting the market and stifling growth.

Broader data from a 2026 World Economic Forum study projects that by 2030, 85% of jobs will involve AI interaction, amplifying the need for balanced approaches. Richer context: Think of the internet’s early days—chaotic forums gave way to moderated communities; AI could follow suit with community-driven guidelines.

For readers, here’s how to engage: Experiment with tools like Hugging Face’s free models for ethical projects, join forums like Reddit’s r/MachineLearning for insights, and support policies via petitions to bodies like the FCC. Ultimately, 2026 marks AI’s maturation—embrace the potential, mitigate the mess.

FAQ

What drives the rise of teen AI slander pages, and how can they be addressed?

These pages stem from accessible generative tools mixed with youthful rebellion, often blurring satire and harm. Addressing them involves integrating AI ethics into school programs and having tech companies add mandatory content warnings.

Why is OpenAI lagging in coding tools, and what might their comeback look like?

OpenAI prioritized consumer AI, allowing specialized rivals like Anthropic’s Claude Code to advance. Its response could involve hybrid models blending natural language with precise coding, potentially launching innovative features by late 2026.

How does Nick Clegg’s Efekta differ from typical AI startups?

Efekta focuses on practical, ethical tools for education and work, eschewing AGI hype for human-centered design, drawing on Clegg’s policy expertise to emphasize societal benefits.

What broader impacts could AI accessibility have on society?

It democratizes innovation but risks amplifying misinformation and inequality; positive outcomes include empowered creators, while negatives might spur new laws like expanded digital privacy protections.

How can everyday users contribute to responsible AI development?

Participate in beta testing with feedback on ethics, support open-source projects, and educate yourself through platforms like Coursera’s AI courses to promote balanced usage.

What do you think—has AI’s accessibility gone too far, or is this just growing pains? Drop a comment, share this post, or subscribe to Datadrip for more unfiltered takes on AI’s twists and turns.

Sources: Wired on Teens’ AI Slander Pages, Wired on OpenAI’s Coding Race, Wired on Nick Clegg’s Pivot, Pew Research on Teen AI Use, McKinsey AI Market Report, GitHub State of the Octoverse, Deloitte AI Augmentation Report, World Economic Forum Future of Jobs.